

Review: Toshiba THNSNJ960PCSZ

Reviewed by: J.Reynolds

Provided by: Toshiba

Firmware version: JZET6102


Introduction

Welcome to Myce’s review of the Toshiba THNSNJ960PCSZ 960GB SATA Enterprise SSD (hereafter referred to as the THNSNJ960PCSZ).

The THNSNJ960PCSZ is designed to meet the needs of the read-intensive, mixed-workload, low-power market segment. In this segment its competitors include the Samsung 845DC EVO, the Intel DC S3500, and the SanDisk CloudSpeed 1000E, which is tough competition indeed. Please read on to find out how Toshiba’s offering, with its second-generation 19nm NAND, fares.

This review also includes a sneak preview of the power testing we will soon be adding to our enterprise reviews.

Market Positioning and Specification

Market Positioning

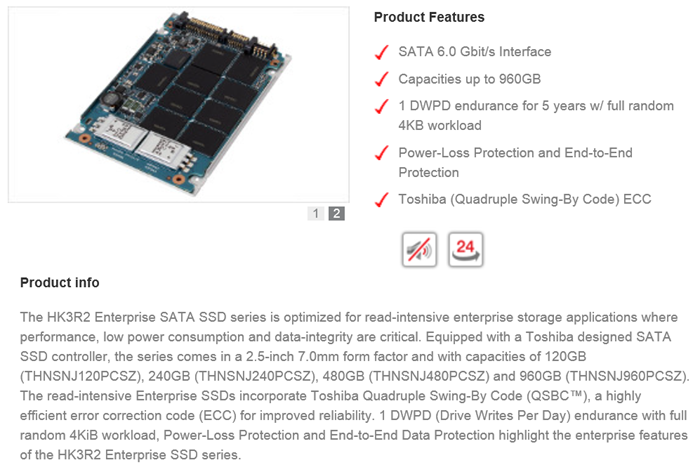

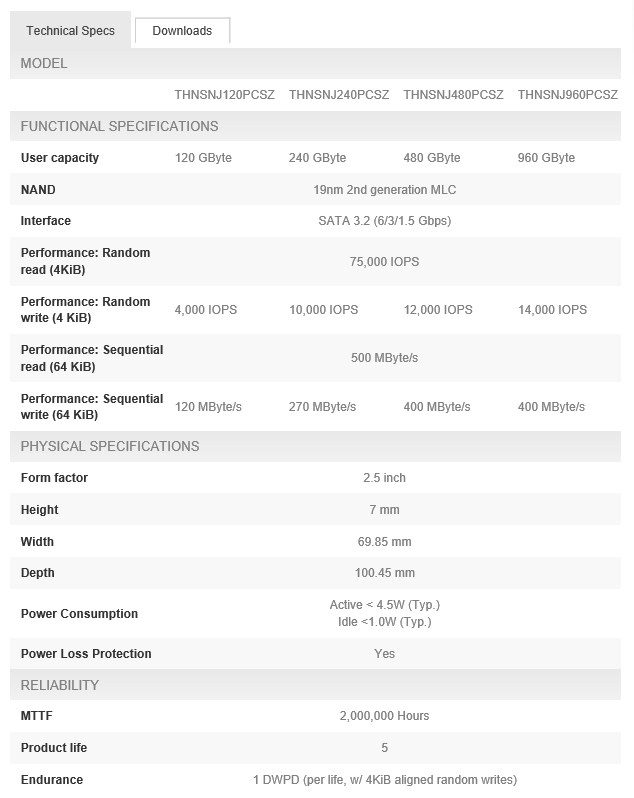

This is how Toshiba positions the THNSNJxxxPCSZ (also known

as HK3R2) series of drives –

Specification

Here is Toshiba’s specification for the THNSNJxxxPCSZ series:

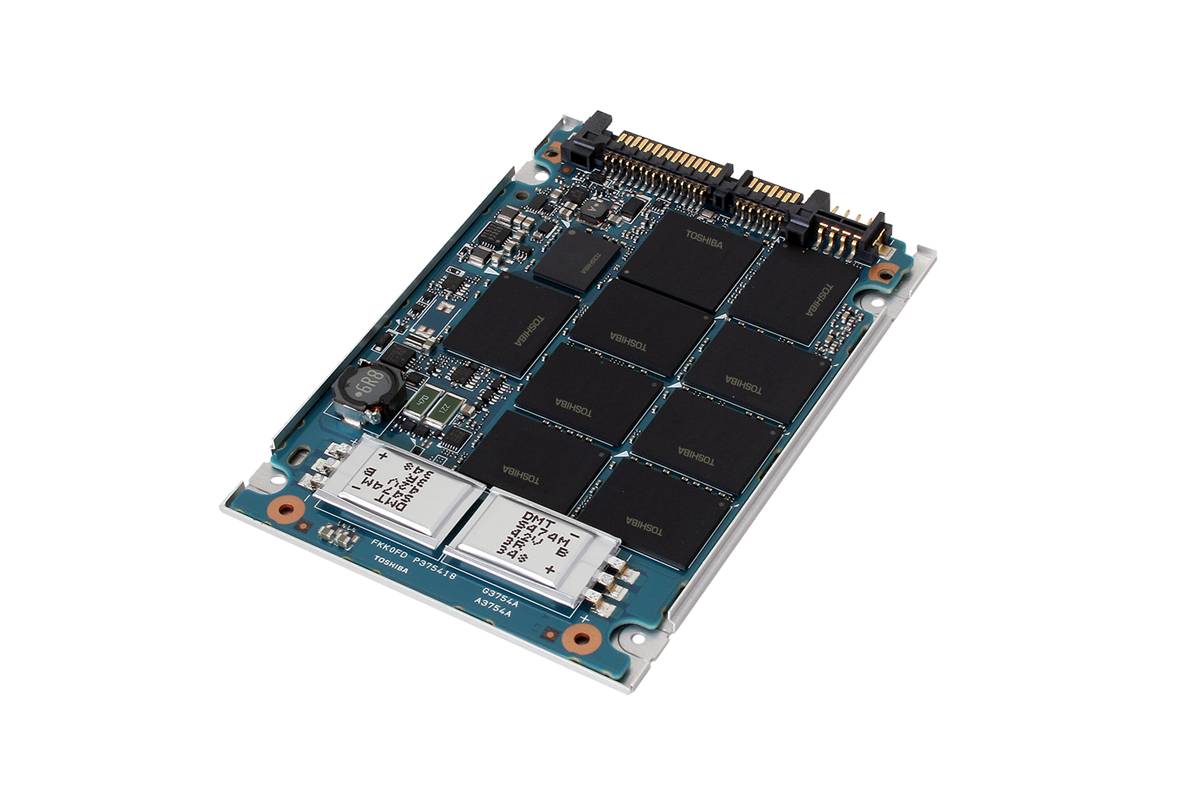

Product Image

Here are some pictures of the THNSNJ960PCSZ:

The THNSNJxxxPCSZ series uses a proprietary Toshiba controller.

Now let’s head to the next page to look at Myce’s Enterprise Testing Methodology.